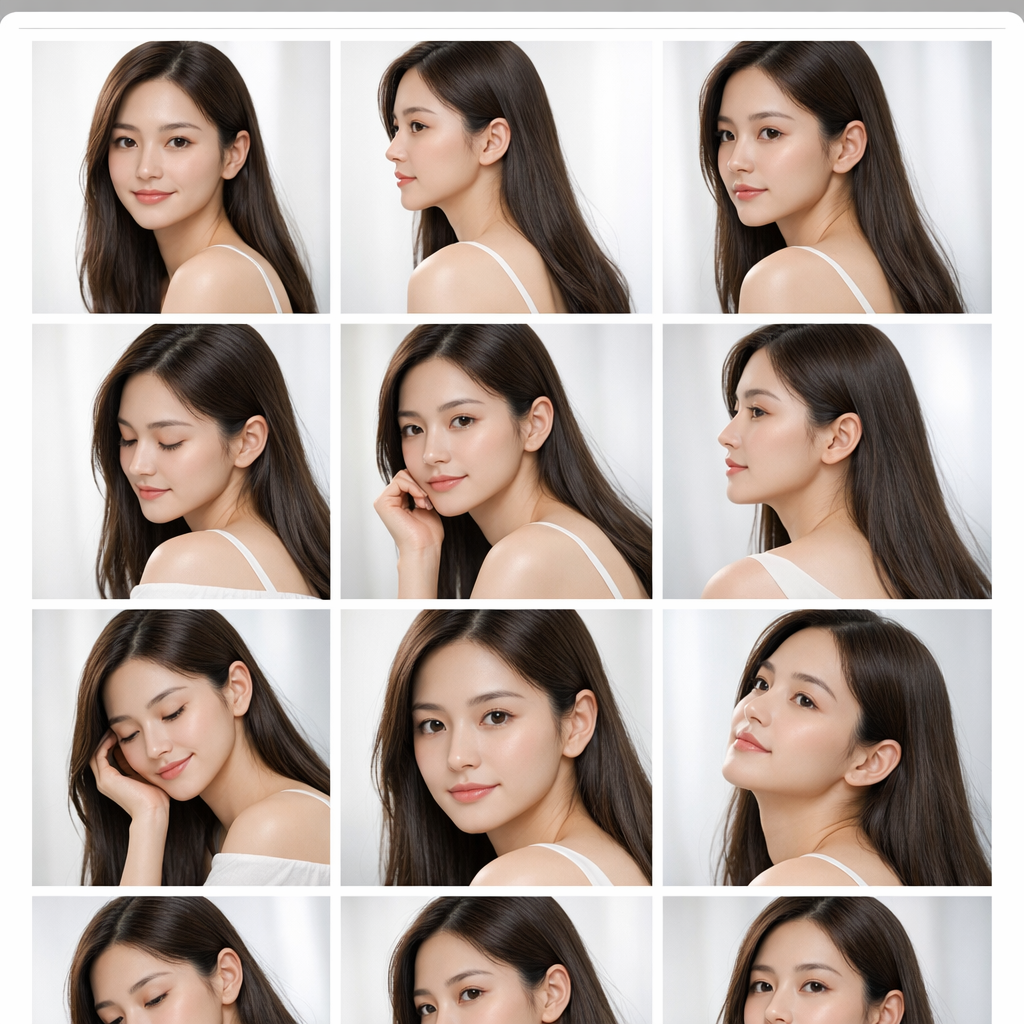

Train a LoRA for Consistent Characters

Turn a small reference set into a reusable character asset. Generate unlimited variations—new poses, outfits, scenes—while keeping the same face and personality.

10–30 images → 15–30 minutes → production-ready consistency.

Get started for free

The fastest path to character consistency

Key features that feel unfair

LoRA Training: custom character models for consistency that actually holds up in production.

What is LoRA Training?

LoRA (Low-Rank Adaptation) is a parameter-efficient fine-tuning technique that teaches an existing model new, specific knowledge without retraining its core weights. The base model stays frozen; LoRA trains small low-rank adapter matrices alongside selected layers, which takes far less memory and compute than full fine-tuning while still achieving high fidelity. Think of it as training an AI artist to recognize and consistently recreate your character’s DNA: face proportions, expressions, accessories, and style cues. With just 10–30 curated images, you unlock unlimited consistent generations for manga panels, game assets, storyboards, and brand characters.
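
In the standard LoRA formulation, a frozen weight matrix $W_0$ is left untouched and a trainable low-rank product $BA$ is added to its output. The symbols below ($W_0$, $A$, $B$, rank $r$, scaling factor $\alpha$) follow the usual notation for the technique and are not defined elsewhere on this page:

```latex
h = W_0 x + \Delta W x = W_0 x + \frac{\alpha}{r} B A x,
\qquad W_0 \in \mathbb{R}^{d \times k},\;
B \in \mathbb{R}^{d \times r},\;
A \in \mathbb{R}^{r \times k},\;
r \ll \min(d, k)
```

Only $A$ and $B$ are trained, so an adapted $d \times k$ matrix gains $r(d + k)$ trainable values instead of the $dk$ that full fine-tuning would update.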

Why character consistency matters

In visual storytelling, consistency is the foundation of trust. When a character’s face shifts between scenes (hair length, eye shape, proportions), viewers feel the break. Standard text-to-image generation starts from random noise, so the same prompt produces a different identity on every run, and even a fixed seed won’t hold the face once you change the prompt. LoRA training solves this by encoding identity into a reusable model so your character stays recognizable across variations.

The science behind LoRA

Full fine-tuning rewrites a model’s core weights—expensive, brittle, and easy to overfit. LoRA keeps the base model intact and learns a low-rank update via lightweight adapters. You get targeted learning (identity, style, concept) with far fewer parameters, smaller files, and faster training—perfect for creator iteration loops.
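
The low-rank update can be sketched in a few lines of plain Python. This is a toy illustration of the general technique, not LlamaGen’s training code: `LoRALinear`, `matvec`, and the initialization choices (small Gaussian `A`, zero `B`, scale `alpha / rank`) are assumptions that follow the common LoRA recipe. Because `B` starts at zero, the wrapped layer initially behaves exactly like the base layer, and only `A` and `B` would ever be updated.

```python
import random


def matvec(m, v):
    """Multiply a matrix (list of rows) by a vector (list of numbers)."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


class LoRALinear:
    """Toy LoRA wrapper around a frozen linear map h = W0 x."""

    def __init__(self, w0, rank, alpha, seed=0):
        self.w0 = w0  # frozen base weights (d x k); never modified here
        d, k = len(w0), len(w0[0])
        rng = random.Random(seed)
        # A (rank x k): small random values; B (d x rank): zeros.
        # With B = 0 the adapter adds nothing until training updates it.
        self.a = [[rng.gauss(0.0, 0.01) for _ in range(k)] for _ in range(rank)]
        self.b = [[0.0] * rank for _ in range(d)]
        self.scale = alpha / rank

    def forward(self, x):
        base = matvec(self.w0, x)
        # Compute B (A x) so the full d x k update is never materialized.
        delta = matvec(self.b, matvec(self.a, x))
        return [h + self.scale * dh for h, dh in zip(base, delta)]

    def trainable_params(self):
        """Count only the adapter values; W0 stays frozen."""
        return len(self.a) * len(self.a[0]) + len(self.b) * len(self.b[0])


w0 = [[1.0, 0.0, 2.0], [0.0, 1.0, -1.0]]  # d = 2, k = 3
layer = LoRALinear(w0, rank=1, alpha=1.0)
print(layer.forward([1.0, 2.0, 3.0]))  # [7.0, -1.0], same as the base layer
print(layer.trainable_params())        # 5 (1*3 for A + 2*1 for B)
```

Computing `B (A x)` instead of forming `B A` first is what keeps the adapter cheap: the intermediate vector has only `rank` entries.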

How it works

Get started in minutes. No ML background required.

Small Dataset, Strong Identity

Teach the model who your character is. A curated reference set locks in the face and key design cues so every new generation still feels like the same person.

Iterate like a creator (not a lab)

Train, test, adjust, repeat. Dial identity strength, style adherence, and flexibility until the results match your production needs—manga pages, games, marketing, and series work.

Use cases

How creators use LoRA training to scale output without sacrificing identity.

Gallery

Examples of what you can create with LoRA training + Character Studio.

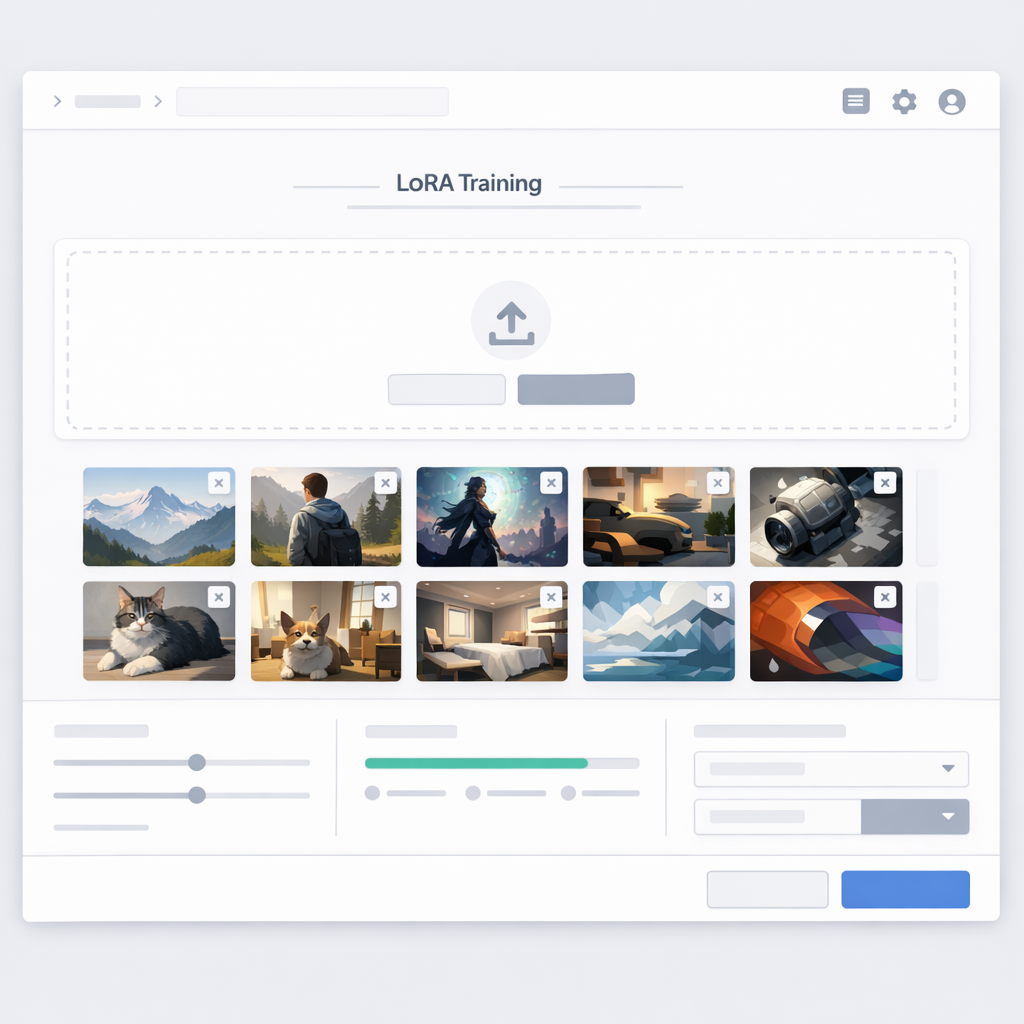

How LoRA Training Works

A practical workflow for consistent character generation.

Prepare a dataset

Collect images that represent the same identity across angles, expressions, and lighting. Clean inputs = better consistency.
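
A quick pre-flight check can catch the most common dataset problems before you upload. A minimal sketch, assuming your references sit in one local folder; `check_dataset` is a hypothetical helper, the 10–30 range comes from this page’s FAQ, and the accepted file extensions are an illustrative choice rather than a LlamaGen requirement:

```python
from pathlib import Path

# Range from this page's guidance (10-30 curated images).
MIN_IMAGES, MAX_IMAGES = 10, 30
# Illustrative set of extensions (an assumption, not a platform requirement).
IMAGE_SUFFIXES = {".png", ".jpg", ".jpeg", ".webp"}


def check_dataset(folder):
    """Return (image_paths, problems) for a folder of reference images."""
    images, problems = [], []
    for path in sorted(Path(folder).iterdir()):
        if not path.is_file():
            continue  # ignore subfolders
        if path.suffix.lower() in IMAGE_SUFFIXES:
            images.append(path)
        else:
            problems.append(f"skipping non-image file: {path.name}")
    if len(images) < MIN_IMAGES:
        problems.append(f"only {len(images)} images; aim for at least {MIN_IMAGES}")
    elif len(images) > MAX_IMAGES:
        problems.append(f"{len(images)} images; consider curating down to {MAX_IMAGES}")
    return images, problems
```

Call `check_dataset("my_character/")` and review the returned problem list. An empty list only means the count and file types look right; it cannot tell whether every image shows the same identity.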

Train the LoRA

Run a lightweight training job to learn the identity/style signal without overfitting.
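
Many LoRA trainers size a run in optimizer steps derived from the dataset. A minimal sketch of that arithmetic, assuming the common convention where each epoch shows every image `repeats` times at a fixed `batch_size`; `total_steps`, `repeats`, `epochs`, and `batch_size` are hypothetical names here, and LlamaGen’s trainer may not expose any of these knobs:

```python
import math


def total_steps(num_images, repeats, epochs, batch_size):
    """Optimizer steps when each epoch shows every image `repeats` times."""
    steps_per_epoch = math.ceil(num_images * repeats / batch_size)
    return steps_per_epoch * epochs


# 20 images, each seen 10 times per epoch, 5 epochs, batch size 2
print(total_steps(20, repeats=10, epochs=5, batch_size=2))  # 500
```

The count matters for overfitting: the same number of steps spread over fewer images means each image is seen more often, which generally raises the risk of memorizing specific photos rather than the identity.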

Generate consistently

Use the trained LoRA in Character Studio to generate new scenes, outfits, and poses while keeping the face stable.

Why Train a LoRA

Consistency is what turns pretty images into a usable story pipeline.

Stable identity across scenes

Keep the same character face while changing backgrounds, camera angles, or outfits.

Faster iteration

Once the identity is captured, you spend less time re-rolling generations just to match the character.

Better series continuity

Useful for comics, manga, webtoons, thumbnails, and any multi-image storytelling workflow.

Creator-grade control

Train, test, adjust — aiming for repeatable production output rather than one-off demos.

Reusable assets

Treat a trained LoRA like a reusable character asset you can bring into new projects.

Works with Character Studio

Go straight into /new/characters to generate, refine, and keep building your roster.

Ready to Build Your Character Roster?

Train once, then generate forever—consistent faces across every scene.

Frequently Asked Questions

How many images do I need for LoRA training?

Typically 10–30 curated images. Variety helps (angles, expressions, lighting), but identity must stay consistent. Clean data beats more data.

What makes a good dataset?

Clear subject, consistent identity, varied viewpoints, good lighting, and tight crops. Avoid mixed subjects, extreme filters, heavy occlusion, or wildly different styles in the same set.
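
Some of these checks can be automated before upload. A minimal sketch, assuming you already have each image’s pixel size (for example from an image library); `ImageInfo`, `flag_image`, the 512-pixel minimum side, and the 2:1 aspect-ratio limit are illustrative assumptions, not LlamaGen requirements. Identity consistency, mixed subjects, and occlusion still need a human eye.

```python
from dataclasses import dataclass

# Illustrative thresholds (assumptions, not platform requirements).
MIN_SIDE_PX = 512
MAX_ASPECT = 2.0


@dataclass
class ImageInfo:
    name: str
    width: int
    height: int


def flag_image(info):
    """Return a list of reasons this image may weaken the dataset."""
    reasons = []
    if min(info.width, info.height) < MIN_SIDE_PX:
        reasons.append(f"low resolution ({info.width}x{info.height})")
    aspect = max(info.width, info.height) / min(info.width, info.height)
    if aspect > MAX_ASPECT:
        reasons.append(f"extreme aspect ratio ({aspect:.1f}:1), consider cropping to the subject")
    return reasons


dataset = [
    ImageInfo("front.png", 1024, 1024),
    ImageInfo("tiny.png", 300, 400),
    ImageInfo("banner.png", 2048, 600),
]
for info in dataset:
    for reason in flag_image(info):
        print(f"{info.name}: {reason}")
```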

Will the character stay consistent across outfits and scenes?

Yes—when trained well, LoRA helps preserve facial identity while you change prompts, outfits, environments, and lighting.

How long does LoRA training take?

Most runs complete in 15–30 minutes, depending on dataset size and complexity.

What’s the difference between LoRA and full fine-tuning?

Full fine-tuning updates a large portion of the model’s weights (heavier compute, higher risk of overfitting). LoRA learns lightweight adapter layers (faster, smaller, easier to reuse).
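
The size difference is easy to estimate. A minimal sketch for a hypothetical set of adapted weight matrices (the shapes are placeholders, not any specific model’s architecture), assuming LoRA is applied to every listed matrix at rank `r` and weights are stored as 16-bit floats (2 bytes each):

```python
def full_params(shapes):
    """Values updated by full fine-tuning of the listed d x k matrices."""
    return sum(d * k for d, k in shapes)


def lora_params(shapes, rank):
    """Each d x k matrix gets B (d x rank) and A (rank x k)."""
    return sum(rank * (d + k) for d, k in shapes)


BYTES_PER_PARAM = 2  # fp16 / bf16 storage

# Hypothetical: 64 square projection matrices of size 1024 x 1024.
shapes = [(1024, 1024)] * 64
full = full_params(shapes)
lora = lora_params(shapes, rank=8)
print(f"full: {full:,} params, {full * BYTES_PER_PARAM / 2**20:.0f} MiB")
print(f"LoRA r=8: {lora:,} params, {lora * BYTES_PER_PARAM / 2**20:.1f} MiB")
```

For these placeholder shapes, the adapter holds about 1.6% of the values the full update would touch (roughly 2 MiB versus 128 MiB), which is why LoRA files are easy to store, share, and swap.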

Can I combine multiple LoRA models?

Often yes, as long as they target the same base model. Stacking a character LoRA with a style LoRA can unlock deeper control, keeping identity stable while you change art direction.
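
One common way to combine adapters is to add each one’s low-rank update to the same frozen weights with its own strength: $W = W_0 + \sum_i s_i \frac{\alpha_i}{r_i} B_i A_i$. A minimal pure-Python sketch of that merge, assuming both LoRAs target the same $d \times k$ matrix of the same base model; `merge_loras` and the adapter dict keys are hypothetical, and the per-adapter `weight` is the kind of knob a UI might label LoRA strength, though how LlamaGen exposes it is not described here:

```python
def matmul(x, y):
    """Multiply two matrices given as lists of rows."""
    cols = list(zip(*y))
    return [[sum(a * b for a, b in zip(row, col)) for col in cols] for row in x]


def merge_loras(w0, adapters):
    """Return W0 + sum(weight * scale * (B @ A)) over all adapters.

    Each adapter is a dict with "A" (r x k), "B" (d x r),
    "scale" (alpha / r) and "weight" (user-chosen strength).
    """
    merged = [row[:] for row in w0]  # copy so the base weights stay untouched
    for ad in adapters:
        delta = matmul(ad["B"], ad["A"])
        factor = ad["weight"] * ad["scale"]
        for i, row in enumerate(delta):
            for j, value in enumerate(row):
                merged[i][j] += factor * value
    return merged


w0 = [[1.0, 0.0], [0.0, 1.0]]
character = {"A": [[1.0, 0.0]], "B": [[1.0], [0.0]], "scale": 1.0, "weight": 1.0}
style = {"A": [[0.0, 1.0]], "B": [[0.0], [2.0]], "scale": 1.0, "weight": 0.5}
print(merge_loras(w0, [character, style]))  # [[2.0, 0.0], [0.0, 2.0]]
```

Because the updates simply add, stacking order does not matter. Two adapters that push the same weights in different directions can still interfere, and lowering one adapter’s weight is usually the first thing to try.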

Can I use LoRA models commercially?

You own what you create with LlamaGen. Please ensure your dataset and prompts respect third‑party rights and IP.

Where do I use the trained LoRA?

Open Character Studio at /new/characters and generate new images using the trained character identity.